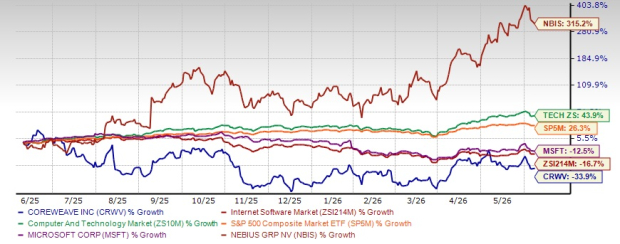

Cerebras, an artificial intelligence startup based in Sunnyvale, Calif., has unveiled Cerebras Inference, which it bills as the world’s fastest AI inference solution.

The company claims Cerebras Inference runs 20 times faster than NVIDIA GPU-based hyperscale clouds, delivering 1,800 tokens per second for Llama 3.1 8B and 450 tokens per second for Llama 3.1 70B.

Cerebras Inference runs on the company’s third-generation Wafer Scale Engine, which Cerebras says gives it an advantage in both speed and cost-efficiency. To sidestep the memory bandwidth bottleneck, Cerebras stores the entire model on its wafer-scale chip rather than fetching the weights from external memory.
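To see why memory bandwidth matters at these speeds, a rough back-of-the-envelope calculation helps. The sketch below is illustrative only and rests on assumptions that are not from Cerebras: weights stored at 16 bits (2 bytes) per parameter, nominal parameter counts of 8 billion and 70 billion, a single request at a time, and every generated token requiring one full read of the model weights. The function name is hypothetical.

```python
def required_bandwidth_tb_per_s(params_billions, bytes_per_param, tokens_per_second):
    """Estimate the memory bandwidth (in TB/s) needed to sustain a token rate.

    Assumption: generating each token reads every model weight exactly once,
    and nothing else (such as the attention cache) is counted.
    """
    model_bytes = params_billions * 1e9 * bytes_per_param
    return model_bytes * tokens_per_second / 1e12


# Token rates are the figures quoted in the article; 2 bytes per parameter
# (16-bit weights) is an assumption made for illustration.
for name, params_b, tokens_per_s in [
    ("Llama 3.1 8B", 8, 1800),
    ("Llama 3.1 70B", 70, 450),
]:
    bandwidth = required_bandwidth_tb_per_s(params_b, 2, tokens_per_s)
    print(f"{name}: about {bandwidth:.1f} TB/s")
```

Under those assumptions, the quoted rates work out to roughly 28.8 TB/s for the 8B model and 63 TB/s for the 70B model, which gives a sense of how much data must move per second when weights are read for every token.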

“Cerebras has surged ahead in Artificial Analysis’ AI inference benchmarks,” said Micah Hill-Smith, co-founder and CEO of Artificial Analysis. “Delivering speeds dwarfing GPU-based solutions, Cerebras hits new heights in processing Meta’s Llama 3.1 8B and 70B AI models.”

Ahead of its anticipated initial public offering, Cerebras appointed Glenda Dorchak and Paul Auvil to its board of directors. Bob Komin, former CFO of Sunrun, joins the company as chief financial officer.

“Bob Komin brings a wealth of operational expertise to Cerebras, having played pivotal roles in pioneering technical and business innovations,” said Andrew Feldman, CEO and co-founder of Cerebras. “His financial stewardship in growth-stage and public firms will be a game-changer for Cerebras.”
